Abstract

Future lunar missions face severe visual challenges caused by extreme illumination, overexposure, deep shadows, and lack of atmospheric diffusion.

L-AVI introduces a machine-learning-based adaptive vision framework that predicts optimal brightness and contrast parameters directly from lunar imagery and applies correction in real time.

1. Introduction

Imaging on the lunar surface must contend with:

- Harsh, unfiltered solar illumination

- Sharp, deep shadows

- Reflective regolith overexposure

- Loss of detail in dark regions

These conditions pose serious risks for astronaut navigation, rover autonomy, habitat monitoring, and precision operations.

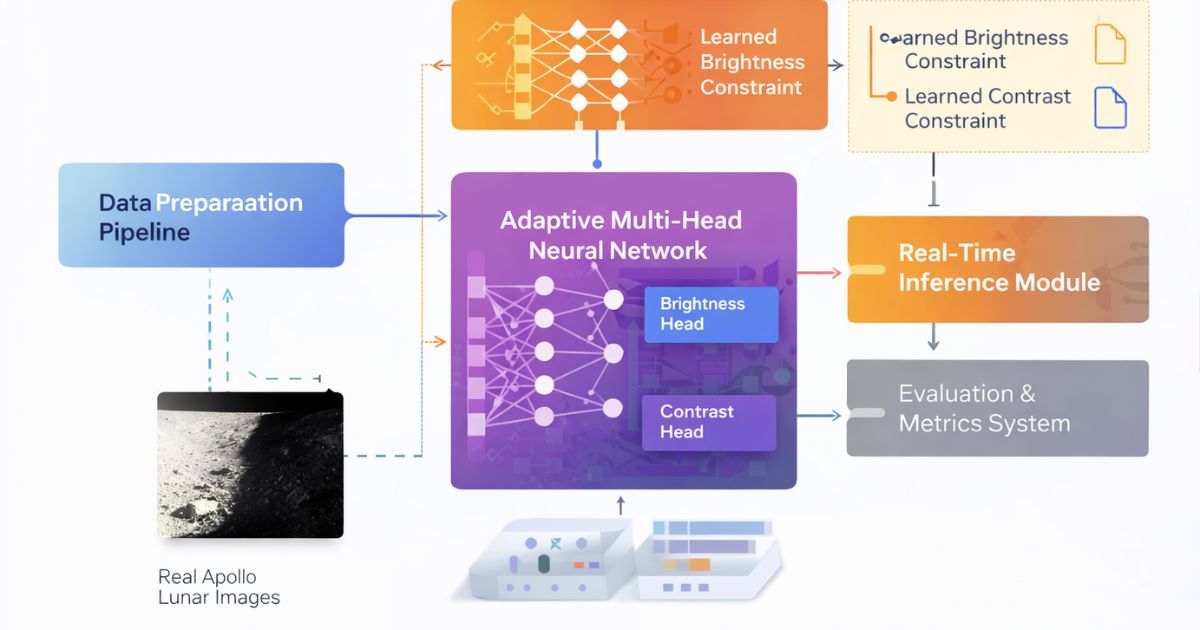

2. System Overview

L-AVI is a modular Python-based framework composed of:

2.5 Mission Signal Flow

A space-grade processing chain that transforms lunar imagery into navigation-ready intelligence.

- Lunar Imaging Arrays: raw frames + sensor metadata

- Illumination Predictor: brightness + shadow inference

- Reconstruction Core: terrain visibility enhancement

- Mission Command: risk overlays & routing

Telemetry Sync

Multi-sensor fusion aligns optical, thermal, and surface data to deliver a consistent lunar map.

- Adaptive exposure balancing

- Terrain hazard scoring

- Signal integrity checks

3. Model Architecture

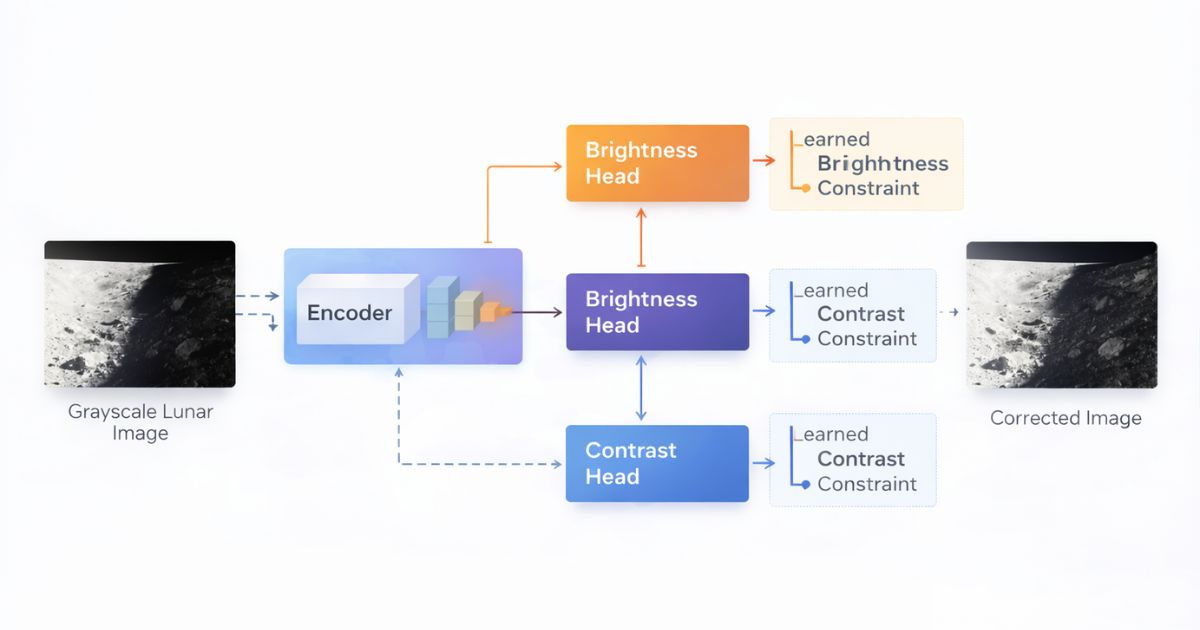

3.1 Encoder

A lightweight convolutional encoder extracts illumination-related features from grayscale lunar imagery.

3.2 Dual Regression Heads

- Brightness Head — global brightness correction

- Contrast Head — global contrast scaling
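The encoder-plus-two-heads layout can be illustrated with a small framework-free sketch. Everything in it is an assumption made for illustration: the class name `DualHeadRegressor`, the single 3x3 convolution layer, the filter count, and the random untrained weights are hypothetical and are not taken from the L-AVI implementation. It only shows how one shared feature vector can feed two independent scalar regressors.

```python
import random


def conv3x3_relu(img, kernel):
    """3x3 'valid' cross-correlation (the op DL frameworks call convolution) + ReLU."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h - 2):
        row = []
        for x in range(w - 2):
            acc = sum(
                img[y + dy][x + dx] * kernel[dy][dx]
                for dy in range(3)
                for dx in range(3)
            )
            row.append(max(0.0, acc))
        out.append(row)
    return out


def global_avg_pool(fmap):
    """Collapse one feature map to a single scalar."""
    return sum(sum(row) for row in fmap) / (len(fmap) * len(fmap[0]))


class DualHeadRegressor:
    """Hypothetical stand-in: shared encoder, brightness head, contrast head."""

    def __init__(self, n_filters=4, seed=0):
        rng = random.Random(seed)
        # Untrained random weights; a real model would learn these.
        self.kernels = [
            [[rng.uniform(-1.0, 1.0) for _ in range(3)] for _ in range(3)]
            for _ in range(n_filters)
        ]
        self.w_brightness = [rng.uniform(-1.0, 1.0) for _ in range(n_filters)]
        self.w_contrast = [rng.uniform(-1.0, 1.0) for _ in range(n_filters)]
        # Assumed biases: zero offset and unit gain mean "no correction".
        self.b_brightness = 0.0
        self.b_contrast = 1.0

    def encode(self, img):
        """Grayscale image (2-D list in [0, 1]) -> one pooled feature per filter."""
        return [global_avg_pool(conv3x3_relu(img, k)) for k in self.kernels]

    def predict(self, img):
        """Return (brightness, contrast) from the shared feature vector."""
        feats = self.encode(img)
        brightness = sum(w * f for w, f in zip(self.w_brightness, feats)) + self.b_brightness
        contrast = sum(w * f for w, f in zip(self.w_contrast, feats)) + self.b_contrast
        return brightness, contrast


# Usage on a synthetic 8x8 gradient frame (not real lunar data).
frame = [[(x + y) / 14 for x in range(8)] for y in range(8)]
brightness, contrast = DualHeadRegressor().predict(frame)
```

Sharing one encoder keeps inference cost close to that of a single-output model, while separate heads let brightness and contrast be supervised independently.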

4. Adaptive Correction Mechanism

This formulation preserves spatial structure, avoids introducing artifacts, and enables controlled real-time correction.
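The exact correction formula is not reproduced in this text, so the sketch below assumes one common form: a global gain applied around the image mean, plus a global offset, clipped to [0, 1]. The function name `apply_correction` and the parameter names are hypothetical. Because the same mapping is applied to every pixel and is monotonic for a positive contrast value, the ordering of pixel intensities is unchanged, which is one way a correction of this kind can preserve spatial structure.

```python
def apply_correction(img, brightness, contrast):
    """Assumed global correction: out = clip(contrast * (p - mean) + mean + brightness).

    img        -- 2-D list of floats in [0, 1]
    brightness -- additive offset (0.0 means no change)
    contrast   -- multiplicative gain around the image mean (1.0 means no change)
    """
    n_pixels = sum(len(row) for row in img)
    mean = sum(sum(row) for row in img) / n_pixels
    return [
        [min(1.0, max(0.0, contrast * (p - mean) + mean + brightness)) for p in row]
        for row in img
    ]


# Usage: lift and stretch a dark, low-contrast row of pixels.
corrected = apply_correction([[0.2, 0.4]], brightness=0.1, contrast=2.0)
```

Only two scalars per frame are needed, so the correction itself is a single pass over the pixels.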

5. Dataset & Preprocessing

- Grayscale normalization

- Intensity scaling

- Synthetic augmentation (planned)
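The first two preprocessing steps can be sketched as follows. The interpretation is an assumption: "grayscale normalization" is taken to mean mapping 8-bit grayscale values to [0, 1], and "intensity scaling" is taken to mean a min-max stretch. The function names are hypothetical, and the actual L-AVI preprocessing may differ.

```python
def normalize_grayscale(frame, max_value=255.0):
    """Map raw grayscale values (assumed 8-bit) to floats in [0, 1]."""
    return [[min(1.0, max(0.0, p / max_value)) for p in row] for row in frame]


def scale_intensity(img):
    """Min-max stretch so the darkest pixel maps to 0 and the brightest to 1."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    if hi == lo:
        # A perfectly flat frame has no range to stretch; return it unchanged.
        return [list(row) for row in img]
    return [[(p - lo) / (hi - lo) for p in row] for row in img]


# Usage on a tiny synthetic frame (not real lunar data).
raw = [[0, 64], [128, 255]]
prepared = scale_intensity(normalize_grayscale(raw))
```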

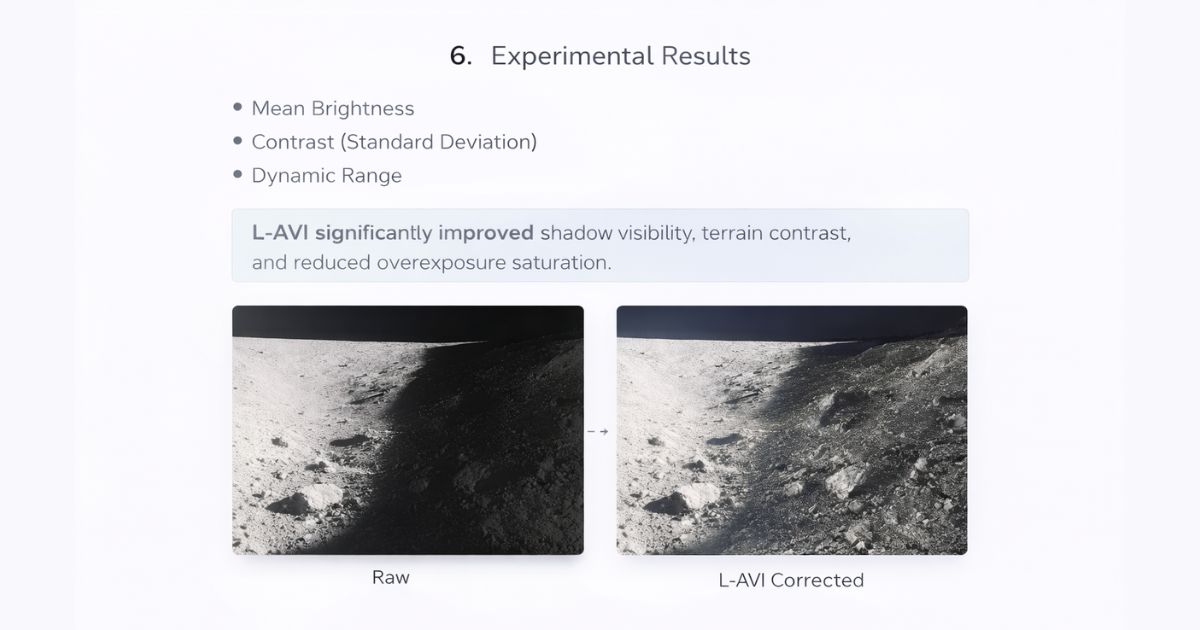

6. Experimental Results

- Mean Brightness

- Contrast (Standard Deviation)

- Dynamic Range
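For reference, the three metrics can be computed as in the sketch below. The definitions are assumptions: contrast is taken as the population standard deviation of pixel intensities, and dynamic range as the maximum minus the minimum intensity. The source does not state which variants were used, and `image_metrics` is a hypothetical helper name.

```python
from statistics import fmean, pstdev


def image_metrics(img):
    """Compute the three evaluation metrics for a 2-D list of floats in [0, 1]."""
    pixels = [p for row in img for p in row]
    return {
        "mean_brightness": fmean(pixels),
        "contrast_std": pstdev(pixels),                # population std. dev.
        "dynamic_range": max(pixels) - min(pixels),    # assumed max-minus-min form
    }


# Usage: compare a frame before and after correction.
before = image_metrics([[0.1, 0.2], [0.1, 0.2]])
after = image_metrics([[0.2, 0.6], [0.2, 0.6]])
```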

L-AVI significantly improved shadow visibility and terrain contrast while reducing overexposure saturation.

7. Constellation Signal Map

A visual intelligence overlay that links mission signals, anomaly clusters, and terrain risk zones.

8. Lunar Mission Dashboard

Mission operators view telemetry, reconstruction health, and hazard states in a single command panel.

Telemetry Overview

Surface Scan

Active ridge detected • confidence 0.91

Risk Monitor

- Shadow depth: Low

- Terrain slope: Moderate

- Thermal drift: Stable

- Signal noise: Nominal

9. Orbital Timeline

Interactive sequence of how L-AVI stabilizes vision across a mission pass.

Orbital Capture

Initial lunar frame ingestion with raw exposure calibration.

10. Applications

10.1 TRL-4 Demonstration

L-AVI has reached TRL-4 with validated reconstruction pipelines and mission-style testing. This demonstration preview shows the model workflow, performance signals, and simulated mission replay.

The demo focuses on low-light lunar frames, illumination prediction stability, and terrain visibility recovery that supports navigation and safety-critical decision making.

Earth Mirror — Planetary Risk Intelligence

Earth Mirror is a geospatial intelligence platform that transforms satellite imagery into living risk layers for climate, infrastructure, and environmental monitoring.

The system fuses cloud dynamics, terrain indicators, and predictive models to surface early warning signals for decision makers. Built for global situational awareness and rapid response workflows.

11. Future Work

- Region-based adaptive correction

- Video temporal consistency

- Synthetic lunar lighting simulations

- Edge & embedded deployment

- Hands-free system integration

12. Conclusion

L-AVI demonstrates that machine-learning-driven adaptive vision is a practical and scalable solution for extreme lunar illumination.